Jiadong Lu

About Me

Hi! I am currently pursuing a master’s degree in the FASTLab (Fire Group) at the College of Control Science and Engineering, Zhejiang University, under the supervision of Yanjun Cao and Chao Xu.

Previously, I received my bachelor’s degree in Automation from Shandong University, where my graduation thesis was recognized as an Excellent Graduation Thesis (top 2%). I am currently preparing to apply for PhD programs.

Research Interests

My research focuses on robotic perception, including ego-state estimation for individual robots and collaborative perception for multi-robot systems. I am particularly interested in developing accurate, real-time, and interpretable state estimation algorithms that enable robots to perceive themselves, localize their teammates, and operate robustly in complex real-world environments.

- Continuous-Time State Estimation: real-time continuous-time SLAM, LiDAR-visual-inertial SLAM

- Multi-Robot Systems: relative state estimation, distributed optimization, air-ground cooperation

News

- [Oct. 2025] Our demonstration of air-ground cooperation was accepted to the IROS 2025 EXPO.

- [Jul. 2025] Our paper on learning-based relative localization was accepted to IROS 2025.

- [Jul. 2024] Our paper on a reconfigurable tracked robot was accepted to IROS 2024.

Publications

-

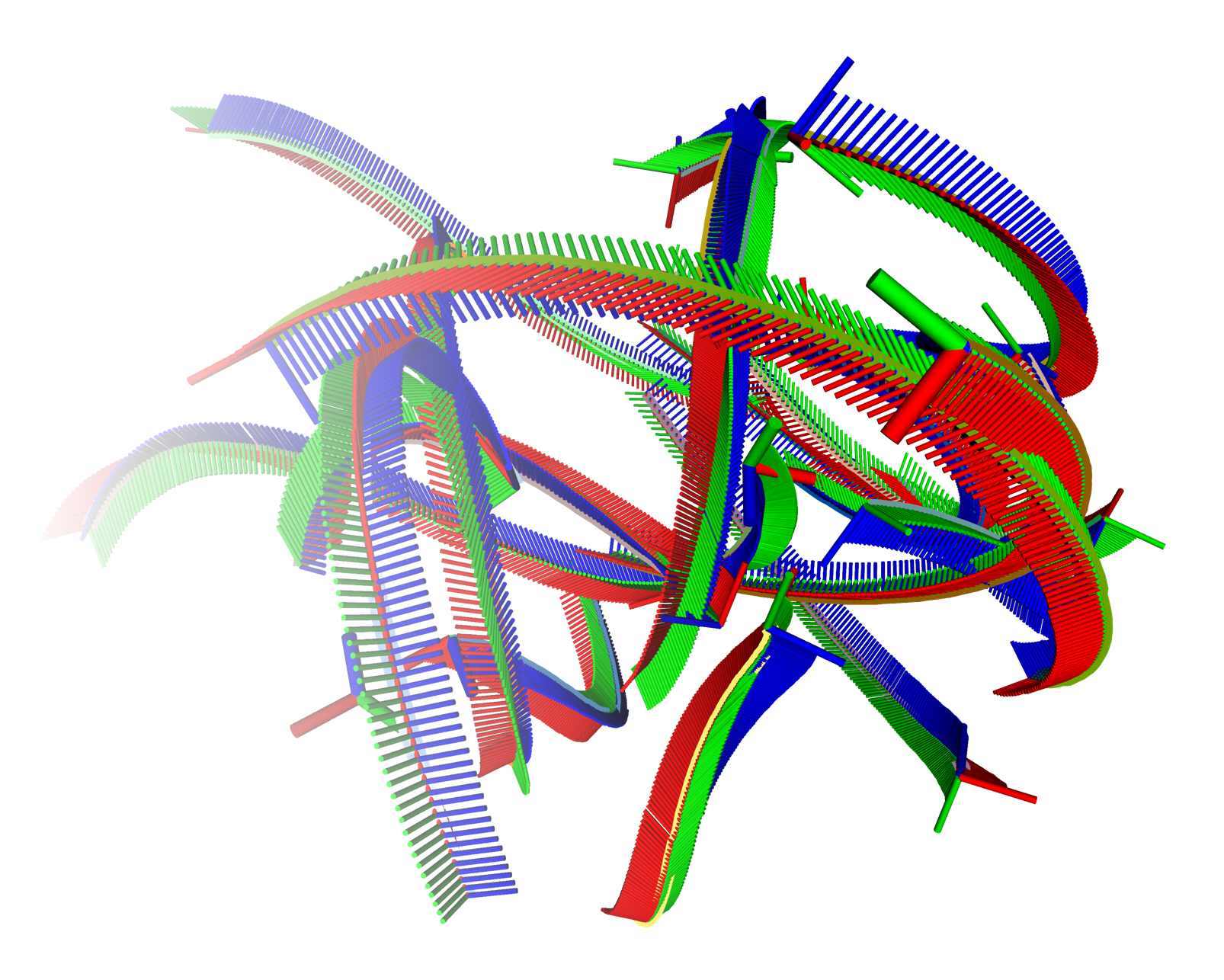

Parallel Continuous-Time Relative Localization with Augmented Clamped Non-Uniform B-Splines

We propose CT-RIO, a continuous-time relative-inertial odometry framework for direct relative localization in multi-robot systems. In our setting, each robot receives inter-robot distance and bearing measurements from UWB and cameras, while IMUs provide high-rate inertial measurements. These measurements are naturally asynchronous, and independent robot clocks can introduce inter-robot time offsets of hundreds of milliseconds. By representing robot motion as continuous-time trajectories, CT-RIO can fuse these asynchronous measurements directly and estimate time offsets using analytic time derivatives of B-splines.
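To illustrate why analytic time derivatives help with time-offset estimation, the sketch below evaluates a uniform cubic B-spline together with its closed-form time derivative, then uses that derivative as the Jacobian in a Gauss-Newton update of a single time offset. It is a minimal sketch under simplifying assumptions: a scalar trajectory, a uniform spline rather than the clamped non-uniform one used in CT-RIO, and hypothetical names (`cubic_bspline`, `estimate_offset`). It is not the CT-RIO implementation.

```python
def cubic_bspline(ctrl, dt, t):
    """Return (value, time derivative) of a uniform cubic B-spline at time t.

    ctrl: scalar control points; dt: knot spacing (both illustrative).
    """
    i = max(0, min(int(t / dt), len(ctrl) - 4))  # index of the active segment
    u = t / dt - i                                # normalized time inside the segment
    basis = [(1 - u) ** 3 / 6,
             (3 * u**3 - 6 * u**2 + 4) / 6,
             (-3 * u**3 + 3 * u**2 + 3 * u + 1) / 6,
             u**3 / 6]
    dbasis = [-(1 - u) ** 2 / 2,
              (3 * u**2 - 4 * u) / 2,
              (-3 * u**2 + 2 * u + 1) / 2,
              u**2 / 2]
    pts = ctrl[i:i + 4]
    value = sum(b * c for b, c in zip(basis, pts))
    rate = sum(d * c for d, c in zip(dbasis, pts)) / dt  # chain rule: du/dt = 1/dt
    return value, rate


def estimate_offset(ctrl, dt, stamps, measurements, iters=6):
    """Gauss-Newton on one time offset td so that spline(t + td) fits the measurements."""
    td = 0.0
    for _ in range(iters):
        num = den = 0.0
        for t, z in zip(stamps, measurements):
            value, rate = cubic_bspline(ctrl, dt, t + td)
            residual = value - z
            num += rate * residual  # J^T r, where J = d(residual)/d(td) = rate
            den += rate * rate      # J^T J
        td -= num / den
    return td


# Synthetic check: measurements are sampled from the spline at t + 0.02 s.
ctrl = [float(k * k) for k in range(8)]
dt = 0.1
stamps = [0.05, 0.15, 0.25, 0.35]
true_offset = 0.02
measurements = [cubic_bspline(ctrl, dt, t + true_offset)[0] for t in stamps]
estimated_offset = estimate_offset(ctrl, dt, stamps, measurements)
```

Because the derivative is available in closed form, the offset enters the optimization like any other state variable, with no finite differencing of the trajectory.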

The core of CT-RIO is an augmented Clamped Non-Uniform B-spline (C-NUBS) representation. C-NUBS eliminates the query-time delay of conventional unclamped B-splines and supports closed-form, shape-preserving spline extension and shrinkage. This enables a knot-keyknot strategy, where knots are extended at high frequency while only sparse keyknots are retained for adaptive relative-motion modeling and efficient optimization.

To scale to robot swarms, CT-RIO formulates a sliding-window relative localization problem using only relative kinematics and inter-robot constraints. The tightly coupled optimization is decomposed into robot-wise subproblems and solved in parallel using incremental asynchronous block coordinate descent. Experiments show that CT-RIO converges from time offsets as large as 263 ms to sub-millisecond accuracy within 3 s, achieves 0.046 m and 1.8° RMSE, and improves accuracy by up to 60% under high-speed motion.

Demonstration Video. Submitted to IEEE Transactions on Robotics (TRO), 2026. Under Review.

-

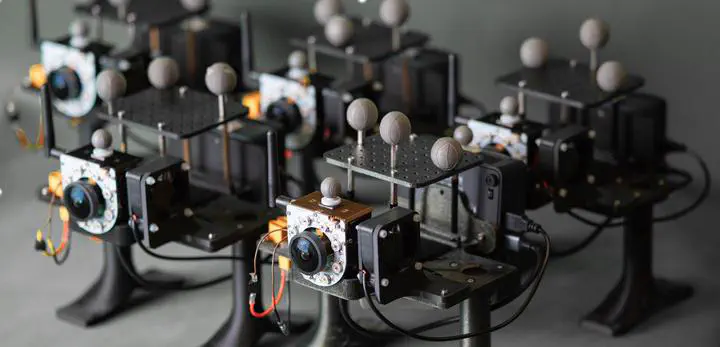

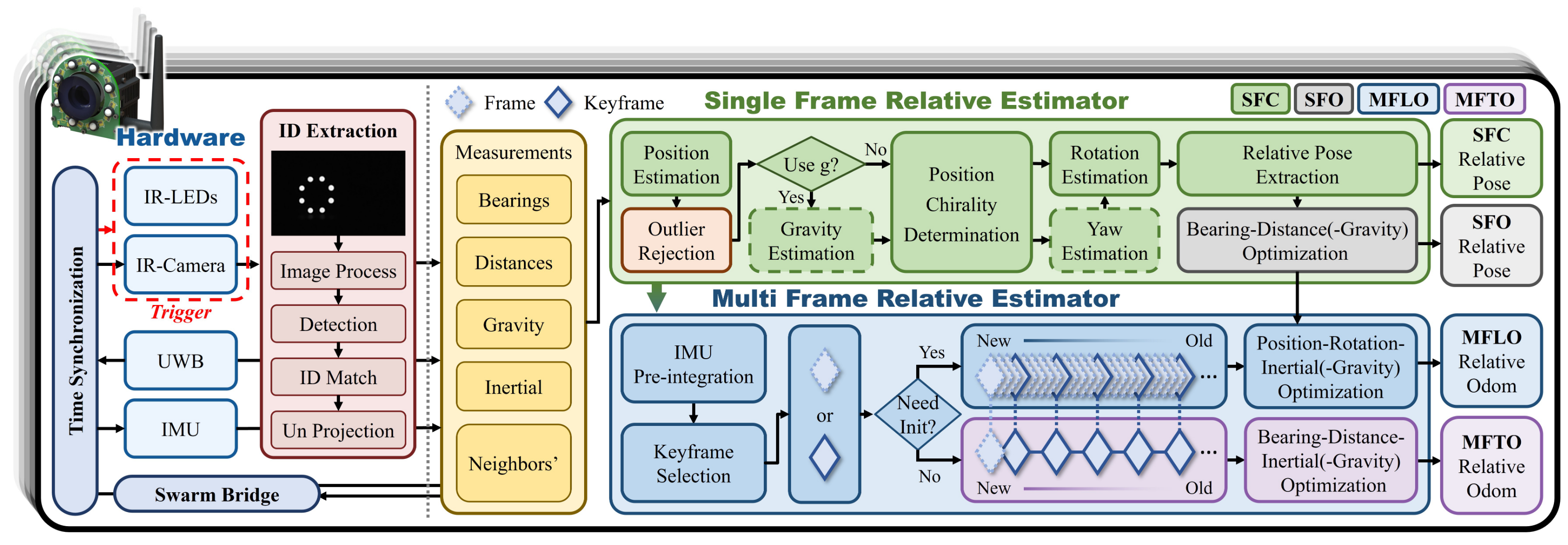

Hierarchical Cooperative Relative Localization via Closed-Form Solutions and Relative Kinematics

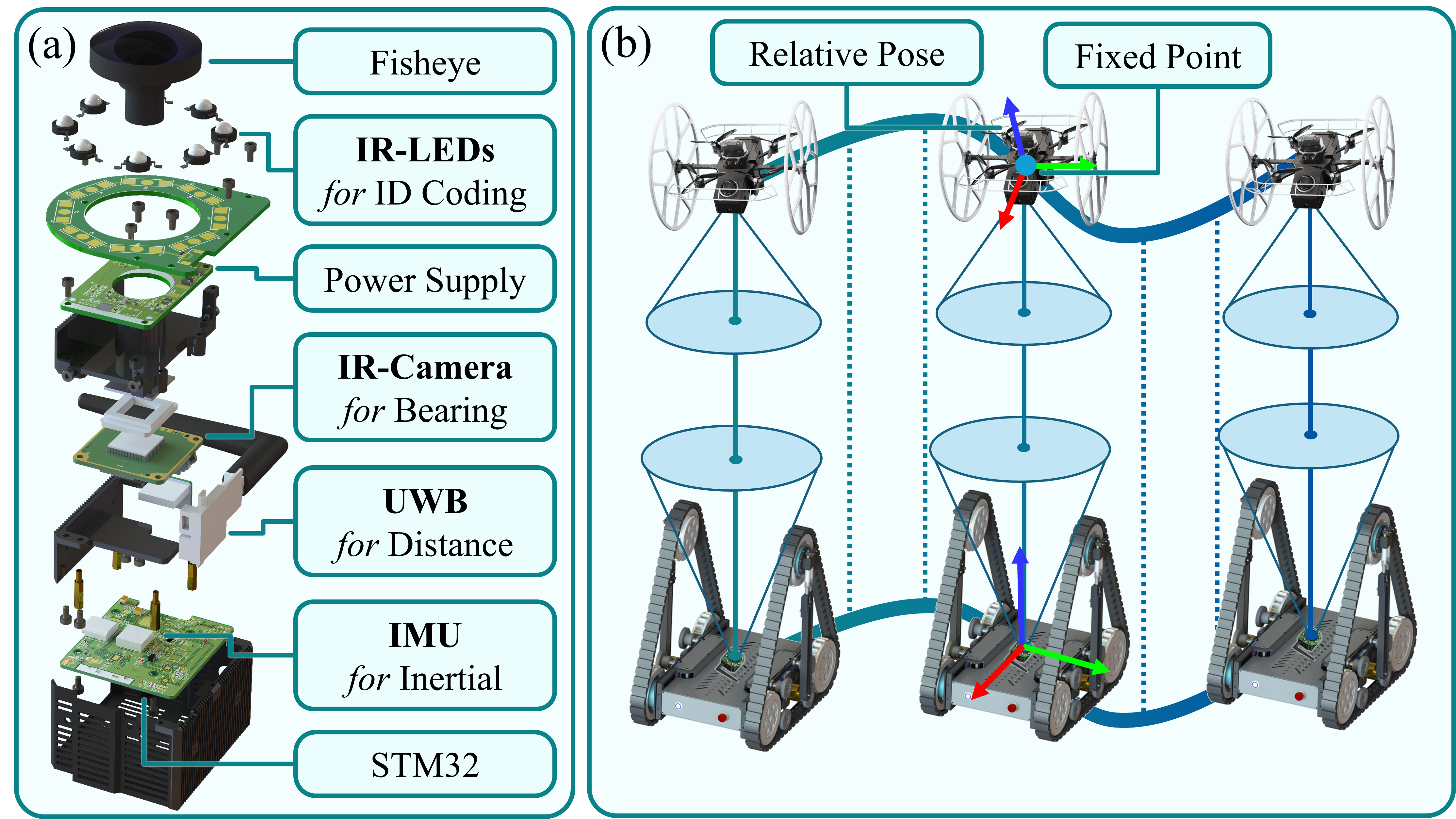

CREPES-X is a hierarchical cooperative relative localization system for autonomous multi-robot systems. It estimates relative poses directly from inter-robot observations, without relying on global information, environmental features, or pre-installed infrastructure. The system is designed to support robust cooperation in challenging scenarios with outliers, non-line-of-sight observations, and non-inertial motion.

The hardware integrates complementary sensing modalities, including IR LEDs, an IR camera, a UWB module, and an IMU. The LED-camera system provides bearing measurements with robot IDs, UWB provides inter-robot distances, and IMUs provide inertial measurements. Together, these measurements enable relative localization in a robocentric frame, independent of external localization systems.

The estimation framework follows a two-stage hierarchical design. A single-frame relative estimator first computes instant relative poses using closed-form solutions and robust bearing outlier rejection. A multi-frame relative estimator then refines the relative states over a sliding window by exploiting IMU pre-integration and robocentric relative kinematics through loosely- and tightly-coupled optimization.
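As a simplified illustration of what a closed-form single-frame estimate can look like, the sketch below recovers a planar relative pose from one distance and a pair of mutual bearings. It assumes a 2D setting, noise-free measurements, and that both robots observe each other in the same frame; the function names are hypothetical. It is not the CREPES-X solver, which also handles outliers and the full 3D case.

```python
import math


def wrap_angle(angle):
    """Wrap an angle to the interval (-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def single_frame_relative_pose(distance, bearing_ab, bearing_ba):
    """Planar pose (x, y, yaw) of robot B expressed in robot A's frame.

    distance:   inter-robot range (e.g. from UWB)
    bearing_ab: angle at which A sees B, in A's frame
    bearing_ba: angle at which B sees A, in B's frame
    """
    # Position: range times the unit bearing vector.
    x = distance * math.cos(bearing_ab)
    y = distance * math.sin(bearing_ab)
    # Yaw: the direction from B to A is bearing_ab + pi in A's frame and
    # bearing_ba in B's frame, so their difference is B's heading.
    yaw = wrap_angle(bearing_ab + math.pi - bearing_ba)
    return x, y, yaw


# Synthetic check: B sits at (3, 4) with a heading of 0.5 rad in A's frame.
true_x, true_y, true_yaw = 3.0, 4.0, 0.5
distance = math.hypot(true_x, true_y)
bearing_ab = math.atan2(true_y, true_x)
bearing_ba = wrap_angle(math.atan2(-true_y, -true_x) - true_yaw)
pose = single_frame_relative_pose(distance, bearing_ab, bearing_ba)
```

A closed-form estimate of this kind needs no initial guess, which is what makes it a natural first stage before sliding-window refinement.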

Experiments show that CREPES-X remains robust under up to 90% bearing outliers and achieves real-world localization accuracy of 0.073 m and 1.817°. This project demonstrates how compact inter-robot sensing, closed-form geometric solvers, and relative kinematic optimization can be combined to build a fast, accurate, and robust relative localization system for robot swarms.

Submitted to IEEE Transactions on Robotics (TRO), 2025. Under Review.

-

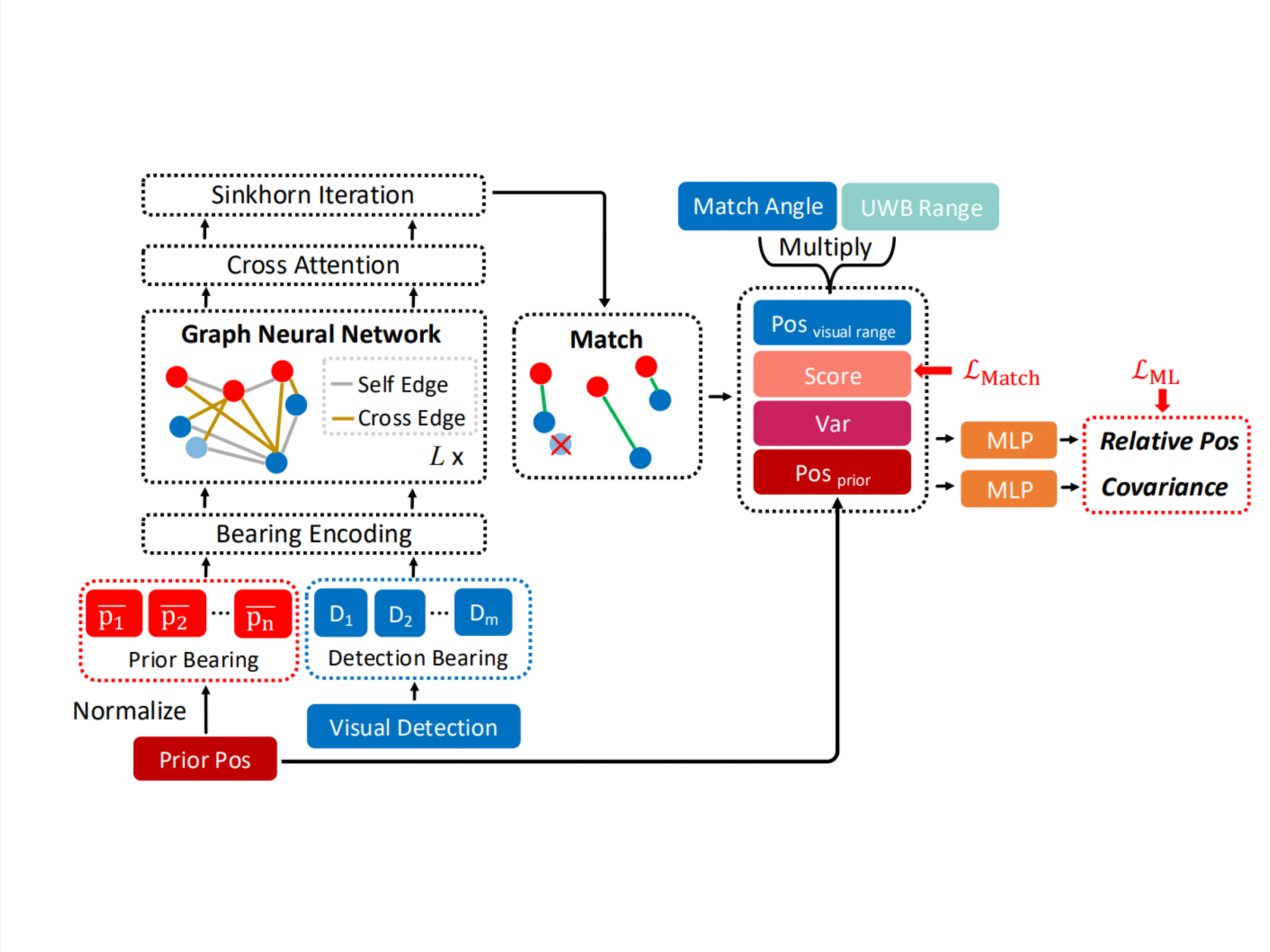

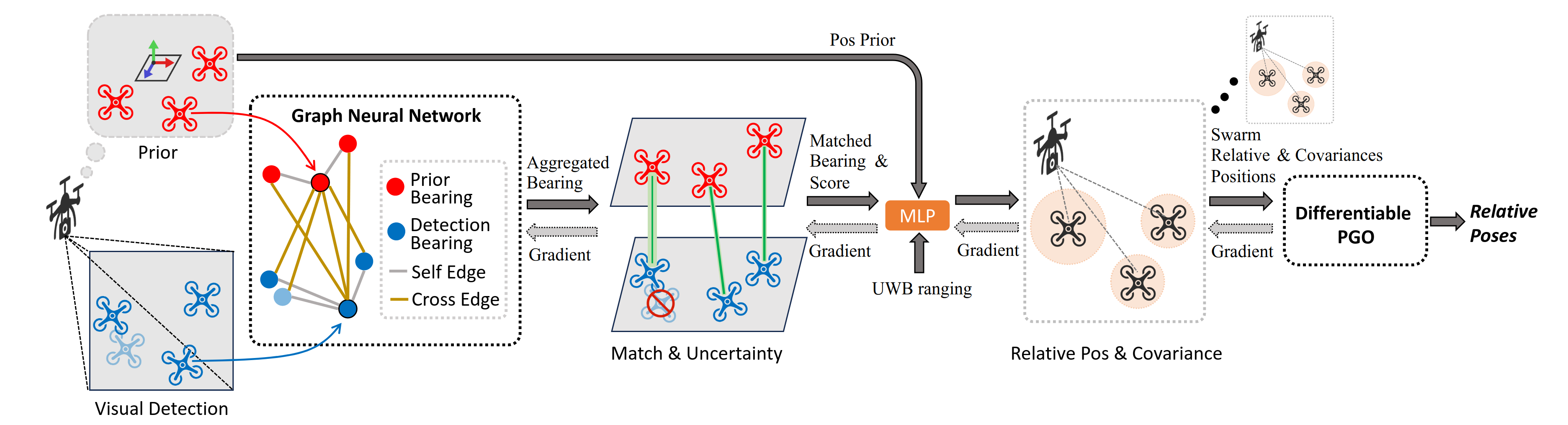

Mr. Virgil: Learning Multi-robot Visual-range Relative Localization

Mr. Virgil is an end-to-end learning framework for multi-robot visual-range relative localization. It tackles the data association problem in UWB-vision fusion systems, where visual detections of neighboring robots are anonymous and incorrect matches can seriously degrade localization accuracy.

We use a graph neural network with differentiable Sinkhorn matching to associate UWB-informed prior bearings with visual detections. The network predicts both relative positions and uncertainty estimates, enabling ambiguous observations to be treated as soft constraints rather than overconfident hard matches.

The learned measurements are fused by a differentiable pose graph optimization back-end and deployed in a decentralized system. Experiments show strong robustness to occlusion, false detections, varying robot numbers, and sim-to-real transfer, achieving 0.129 m RMSE in real-world non-line-of-sight scenarios.

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2025. Oral Presentation.

-

Novel Design of Reconfigurable Tracked Robot with Geometry-Changing Tracks

This paper introduces CubeTrack, a reconfigurable tracked robot with geometry-changing tracks, designed to achieve strong terrain traversability while maintaining a compact and mechanically efficient structure.

Its key innovation is a passive Quad-slider Elliptical Trammel Mechanism (Qs-ETM), which guides the planetary wheel along an elliptical trajectory and keeps the track tension nearly constant during reconfiguration. Combined with an end-wheel-driven actuation scheme using direct-drive motors, CubeTrack reduces the required flipper-driving torque by up to 68.3% and the flipper shear stress by up to 67.1% compared with conventional center-driven designs.
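The geometric idea behind an elliptical trammel can be checked numerically. The sketch below models the classical two-slider trammel, not the four-slider Qs-ETM itself, and assumes for illustration that two fixed wheel axes sit at the foci of the ellipse, a detail not stated above. Under that assumption, the summed distance from the moving wheel to the two fixed axes stays constant, which is the property that would keep a belt around them at constant length if wheel radii are ignored. All names and dimensions are hypothetical.

```python
import math


def trammel_point(a, b, theta):
    """Classical trammel: a rod of length a + b with one slider on each axis.

    Returns the traced point and both slider positions for rod angle theta.
    The traced point lies at distance a from the y-axis slider and b from the
    x-axis slider, so it follows the ellipse (a*cos(theta), b*sin(theta)).
    """
    slider_x = ((a + b) * math.cos(theta), 0.0)
    slider_y = (0.0, (a + b) * math.sin(theta))
    point = (a * math.cos(theta), b * math.sin(theta))
    return point, slider_x, slider_y


def focal_path_length(a, b, theta):
    """Sum of distances from the traced point to the two foci (requires a >= b)."""
    c = math.sqrt(a * a - b * b)  # focal distance from the ellipse center
    (x, y), _, _ = trammel_point(a, b, theta)
    return math.hypot(x + c, y) + math.hypot(x - c, y)


# Hypothetical semi-axes in meters; the focal sum should equal 2 * a everywhere.
a, b = 0.3, 0.2
lengths = [focal_path_length(a, b, math.radians(deg)) for deg in range(0, 360, 15)]
```

In a real track the wheels have nonzero radii, so the belt length is only approximately constant, which is consistent with the "nearly constant" tension described above.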

This was my first research project after joining my master’s laboratory. As a co-first author, I was responsible for the mechanical analysis of the candidate structural designs, for refining the reconfigurable track mechanism through mathematical modeling, and for analyzing the robot’s kinematics and dynamics. This project gave me hands-on experience in mechanism design, force analysis, simulation validation, and real-world robotic system development.

Demonstration Video. IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2024. Oral Presentation.

Projects

-

IROS

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2025. IROS 2025 EXPO.


Services

Conference Reviewers

- 2025 IEEE International Conference on Robotics and Automation (ICRA)

- 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)

Journal Reviewers
